SECURE CLOUD TRANSFORMATION

THE CIO'S JOURNEY

By Richard Stiennon


Chapter 8

The Role of the CIO is Evolving

“We’ve got to go faster.”

Bruce Lee, Former CIO, Fannie Mae

Adapt or Disrupt

Enterprise digital transformation is upon us, and its impact on IT and the business is imminent. As Michael Day, CIO of Cannery Casino Resort, states, “Change is inevitable. Change is upon you now, and more is coming. You cannot prevent it, and you cannot slow it down.”12

With that emerges a new kind of IT leader with transformational skills, one who becomes the bridge between the business and IT. More and more enterprises today rely on their CIOs to successfully navigate their organizations through continuous change, making this role more challenging and critical than ever. Historically, the CIO role placed heavy emphasis on technical skills, but this is now rapidly changing to encompass a more strategic mandate.

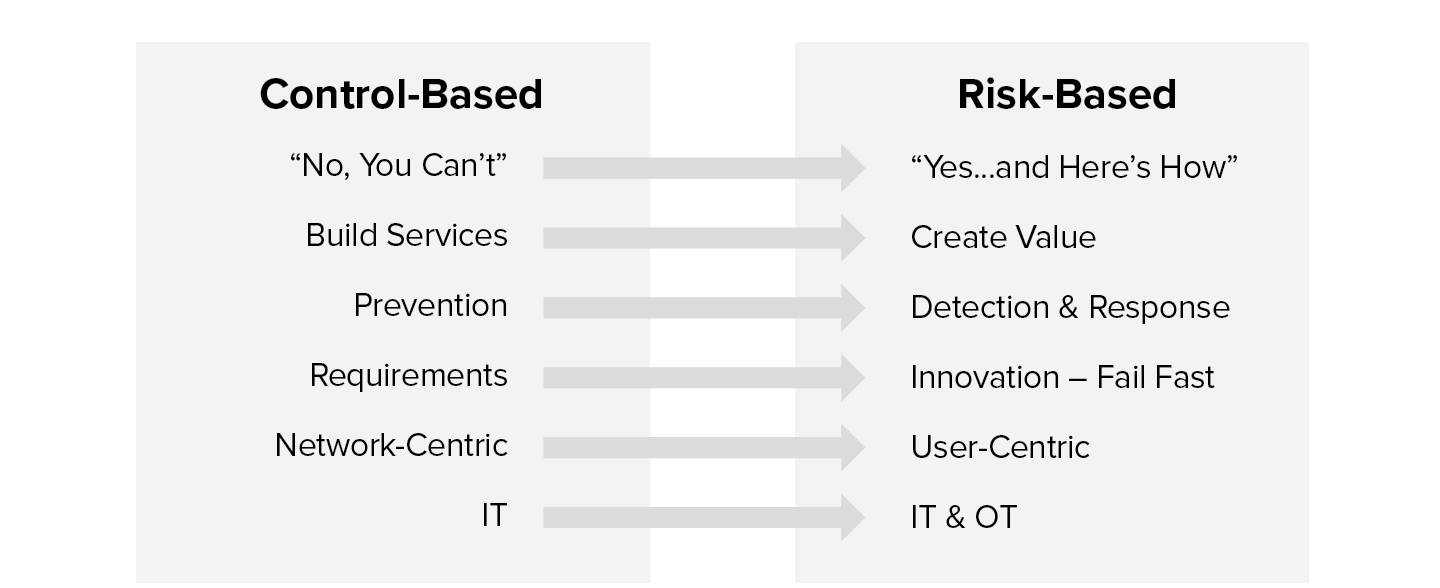

As the C-Suite gears up to out-innovate their competition, CIOs are invariably being positioned as the function most responsible for moving innovation initiatives forward within their organizations.13 To achieve this, the CIO’s mindset must change—from one of controls to one of risk. The illustration below summarizes how IT leaders traditionally controlled all aspects of IT services. With the advent of cloud and mobility, all of that has changed.

Figure 8.1 The change from control-based to risk-based thinking

The role of the CIO now spans a broader mandate, shifting from a delivery executive to a business executive, i.e., from controlling costs and re-engineering processes to driving revenue and exploiting data.14 Delivering business growth, driving organization-wide digital transformation, and improving the enterprise’s risk posture amid the rapid increase in cybersecurity threats are cited as some of the top priorities for CIOs today.15

Barriers to Innovation

As we establish the organizational impetus to innovate and the role of the CIO in making this a reality, why aren’t more enterprise CIOs committing to this digital journey? The reality is that the majority of CIOs face an array of organizational challenges that become barriers to innovation and impede their ability to make this shift. These barriers range from existing organizational silos, to legacy processes, to internal cultural resistance to change, to a lack of innovative thinking throughout the organization.16 In many cases, CIOs are being tasked with raising the Titanic with little strategic, digital, and organizational support and know-how.

Become a Digital Enabler

CIOs today must face the challenge of embracing disruption proactively and with a carefully formulated game plan, rather than adapting incrementally. As the adage goes, “disrupt or be disrupted.”

The first step to becoming a digital disruptor is to challenge the current mindset and embrace a culture of innovation.

Gartner defines the five key traits of a digital disruptor as follows:17

1. Thrive despite uncertainty. A disruptive digital leader understands and embraces the idea that uncertainty is inevitable. That leader explores what is technologically possible, how changes will disrupt the markets and the risk-reward trade-offs, and establishes a plan that allows for change and evolution.

2. Focus on ideas that leapfrog ahead. All decisions need to be rooted in the end goal or mission. This demands a risk-tolerant mindset and a true digital leader is driven by the challenge and potential for creating net-new business value by harnessing breakthrough technology.

3. Select your digital-era lever. Digital leaders look beyond distractions. Their goal is to become a pioneer and to sustain a long-term investment to secure a position as a leader. They do this by selecting a lever and focusing on making it a core competency of the company.

4. Start, experiment, learn, iterate. Digital leaders understand that well-grounded strategic bets, based on expected business outcomes and digital levers, need to be the focus of the company. They take an experiment-driven learning loop approach to inform actions rather than waiting for absolute clarity before proceeding.

5. Innovate faster than others. In a digital era rich in disruption, it’s a given that companies must innovate faster than their competitors. By actively championing and role-modeling a culture of innovation and creativity, digital leaders encourage risk-taking and discovery across all levels of the organization.

CTO Journey

General Electric Company

Shifting from a Control to a Risk Mindset

| Attribute | Detail |
|---|---|
| Company | General Electric |
| Sector | Conglomerate |
| Driver | Larry Biagini |
| Role | Former VP & CTO |
| Revenue | $122 billion |
| Employees | 300,000 |
| Countries | 170 |
| Locations | 8,000 |

Company IT Footprint: GE is a global name and has been an icon of technology innovation for well over a century. At the time of writing, there were about 9,000 IT employees and another 15,000 contractors at GE. They maintained a portfolio of around 8,000 applications distributed across 45,000 compute nodes. The IT infrastructure served 300,000 employees in 170 countries around the world.

“The modern CIO has to understand where the business is trying to go, because if a business isn’t growing, it’s dying and everyone out there knows that they’re at threat from digital companies.”

Larry Biagini, former Vice President and Chief Technology Officer, General Electric Company

In the pre-cloud world, everything inside the defined corporate network was considered to be good, and everything outside was potentially harmful. So the game was to protect the inside from the potentially bad on the outside. Unfortunately, there is no inside and outside anymore. Larry Biagini was formerly the Vice President and Chief Technology Officer at General Electric. In the next part of this chapter, he shares his perspectives on GE’s cloud transformation journey during his tenure. He also highlights how the C-Suite is evolving, and how the role of the modern CIO is shifting from technology-first to business-first today, requiring them to transition from control-based thinking to a risk-based mindset.

In the words of Larry Biagini:

I retired from GE in 2015 after spending 26 years in various roles ranging from the CIO of a business unit to global CISO, as well as the global CTO. In 2010 it became very obvious to me and others that more and more activity was happening outside our corporate environment than inside. This was beyond activities like personal web browsing; we were doing more and more business over the internet. We were using software-as-a-service applications to make our business more efficient via interactions with our suppliers and our customers.

We also had product and software engineers putting stuff in AWS or Azure to quickly try things out. It became very clear that we had to re-evaluate how we were managing security to protect our environment. The old model was that everything inside was good, everything outside was potentially bad. So the game was to protect the inside from the potentially bad on the outside. Unfortunately, there was no inside and outside anymore. There were just people using devices on an available network trying to get their jobs done. The more walls we put up and the more security policies we put in place to try to protect our network, the more people found ways around it and the less visibility we had into what they were doing.

Protecting “the network” no longer works

Counterintuitively, by trying to protect the network, we were actually making the corporate network more vulnerable because we couldn’t see what people were doing when they were not on our network.

We couldn’t prevent them 100% of the time from doing certain things that could have security consequences and our users were dissatisfied with the way security was trying to prevent them from getting their jobs done. For example, we had global policies in place that said things like sexual content needs to be blocked. Makes sense, but classification of content is not a science and we had researchers in our healthcare business being denied access to sites that had to do with cancer research. Trying to set up a policy based on where a user was in the network to allow the healthcare folks to look at breast cancer research, which may be misclassified as sexual content, while at the same time not allowing that same thing to happen in our finance business was almost impossible to do.

People were finding ways around it. They’d come in, they’d turn off their Wi-Fi connection and use 4G or LTE, so ultimately we were not doing our job because we were preventing our end users from doing theirs.

User mobility breaks the traditional networking and security paradigm

Our organization was already widely distributed, and we were starting to see that more and more of our people were working remotely: they were out at customer sites, on windmills, and visiting oil rigs. They were out doing their jobs and they were off our network. So the idea of protecting the corporate network soon became a decision to put only the pieces of the network that were most crucial to us behind a perimeter, never allowing them to be connected to the internet, and to treat everybody else as if they were on an open, untrusted network. Our goal was to protect our users no matter where they were, and that’s when we started thinking about simply moving our proxy into the cloud. The proxy acted as a security gateway between our internal corporate network and the internet.

User mobility necessitates change and one of the first things we did was move our proxies and gateways to the cloud.

Cloud security enables user-centric policy enforcement

Moving our security gateway into the cloud gave us one clear benefit. Now we could actually tie policies, both a security policy and a compliance policy, to an individual user no matter where they were in the world.


Regardless of the network they were on, the user would always get the same experience. It wasn’t dependent upon whether you were sitting in Atlanta, New York, or San Ramon, California. Once the security gateway was in the cloud and the policy followed the user, we had happier users.
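The idea of a policy that follows the user rather than the network can be illustrated with a minimal sketch. Everything in it is hypothetical: the business-unit names, the content categories, the `BLOCKED_CATEGORIES` table, and the `is_allowed` function are illustrative assumptions, not GE's or any vendor's actual policy engine.

```python
from dataclasses import dataclass

# Hypothetical policy table: content categories blocked per business unit.
BLOCKED_CATEGORIES = {
    "healthcare": {"malware"},
    "finance": {"malware", "sexual_content"},
}

# Hypothetical fallback for users whose business unit has no explicit entry.
DEFAULT_BLOCKED = {"malware", "sexual_content"}


@dataclass(frozen=True)
class User:
    name: str
    business_unit: str


def is_allowed(user: User, category: str, network: str) -> bool:
    """Return True if the user may access content in `category`.

    `network` is accepted only to make the point that it plays no part
    in the decision: the policy is keyed on who the user is.
    """
    blocked = BLOCKED_CATEGORIES.get(user.business_unit, DEFAULT_BLOCKED)
    return category not in blocked


researcher = User("researcher", "healthcare")
analyst = User("analyst", "finance")

for network in ("office", "home", "coffee-shop"):
    # Only identity is consulted, so the answers do not vary by network.
    print(network,
          is_allowed(researcher, "sexual_content", network),
          is_allowed(analyst, "sexual_content", network))
```

In this sketch a healthcare researcher can reach a site that was misclassified into a blocked category while a finance user cannot, and neither outcome depends on where the user connects from.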

Delivering a rich user experience while maintaining visibility

So the first big win for us was user satisfaction. The ability to deliver a consistent user experience—both from a performance standpoint, and a policy standpoint. And that made a big difference in the way that our users thought about our security team.

From a security perspective we now had visibility into what everybody was doing wherever they were. There was no concept of on-net or off-net anymore. We could see if the user was home, at a Starbucks, or in the office. We could apply a policy but we could also get security visibility into what they were doing. So the chances of a user being off-net, getting infected because they weren’t protected by the network controls that we had in place and then coming back on-net and causing a problem went down drastically.

If we can kick everybody off the corporate network and they’re going to the internet through a cloud security gateway, that’s fine, as we’re protected and can apply compliance policies. But the reality is that the user has to get back onto the corporate network to run applications that are in our data center. The traditional solution was a network VPN connection, but that broke our model. If we allowed them to come back on the network via VPN, we were opening our corporate network to whatever evil lay on the other side of the VPN.

We ended up developing our own solution, My Apps, that allowed a user anywhere to run any internal web-based application without being on the corporate network. Basically, we validated and authenticated the user, and we validated and authenticated the device. If both of those passed the test and a policy said you were allowed to run that application, you could run it wherever you were.
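The three checks described above (user, device, and policy) can be sketched as a single access decision. This is a minimal illustration under stated assumptions, not the My Apps implementation: the `AccessRequest` fields, the `ENTITLEMENTS` table, and the `may_run` function are all hypothetical, and the identity and device checks are assumed to have been performed elsewhere.

```python
from dataclasses import dataclass

# Hypothetical entitlement table: which applications each user may run.
ENTITLEMENTS = {
    "alice": {"expenses", "hr-portal"},
    "bob": {"expenses"},
}


@dataclass(frozen=True)
class AccessRequest:
    user_id: str
    user_authenticated: bool  # outcome of an identity check done elsewhere
    device_validated: bool    # outcome of a device check done elsewhere
    app: str


def may_run(request: AccessRequest) -> bool:
    """Grant access only if the user, device, and policy checks all pass."""
    if not request.user_authenticated:
        return False
    if not request.device_validated:
        return False
    # Policy check: is this user entitled to this specific application?
    return request.app in ENTITLEMENTS.get(request.user_id, set())


print(may_run(AccessRequest("alice", True, True, "hr-portal")))
print(may_run(AccessRequest("alice", True, False, "hr-portal")))
print(may_run(AccessRequest("bob", True, True, "hr-portal")))
```

Note that the network the request arrives from never appears in the decision; access is granted per application rather than per network.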

We were talking to Zscaler, our cloud proxy provider at the time, and we saw great use for it as we were doing acquisitions and divestitures. We were doing an acquisition where we knew that the acquired company was compromised and it would take us years to fix it. We used My Apps to give the acquired company’s users access to GE applications and vice versa—GE people accessed the acquired company’s applications without ever connecting the networks together.

This was a time saver, a money saver, and obviously a better security posture for us.

Since then, Zscaler has introduced Zscaler Private Access (ZPA) to do the same. ZPA is much more robust than what we built ourselves and much more integrated in the cloud, but the same premise holds true: you can’t secure a network that allows users on it. Networks don’t really get attacked; attackers go after users who have network access. After that it’s pretty much game over. Most organizations have a flat network where once you’re in you can go anywhere. Those who have tried to segment those networks at the network level have failed miserably. I know because we tried as well, and it’s just way too complicated, especially in a large organization.

Connect users to applications not networks

So the solution is really to make sure that the right user on the right device gets access to the right services regardless of the network they’re on. If you can do that you can kick all your users off your corporate network. And you’re 100 times more secure.

If you think about it logically, you don’t own the network, because as soon as you connect to the internet, you’ve lost complete control. It doesn’t matter if you’re a two-person shop or a 200,000-person shop. Any connection you have means a loss of control, and the more connections you have, the more control you lose. If you have users that have things like laptops, or iPads, or iPhones, they’re not always going to be on the network that you want to control.

“Make sure that the right user on the right device gets access to the right services regardless of the network they’re on.”

Your employees are going to be doing business, and they’re going to be at risk from infections, ransomware, and things like that. Many organizations only have network security, which means you are secure only in your office and on the corporate network. When users go home, they have no protection, are at risk, and can get infected with ransomware. They come back the next day, they plug into the corporate network, and that ransomware can now infect the entire corporate network.

Take the same scenario where that person could do his or her job every single day no matter whether they’re at home or at the office, and not be on the network that you care about. They’ll still possibly get infected with ransomware, but if they do, the damage is limited, because the network they’re on is not the corporate network that you care about. It’s the internet. The only thing they can affect is the person sitting next to them at home, but it can’t spread across your internal company network because your internal network never hosts that user.

It’s an enormous shift in thinking, but it’s the only shift that makes sense. For everybody who’s trying to secure their entire network from bad things happening, the next question you will need to ask them is how big their exception list is. Because everybody has exceptions. They may say they have a policy that says you can’t do these five things, except for the CEO who has privileges to do so. What you find when you start peeling back the onion is that their network protections are porous, never mind the network being porous. The network protections themselves are porous by design.

If you want to go in front of your board and say that you can prevent 95% of bad things happening to your organization by doing one thing and one thing only, tell them you could turn off accepting external emails into your organization. With just that one thing, you will create an environment where you are so well protected from anything bad happening that they’ll love you immediately. On the other hand, not accepting unknown emails from unknown parties is a terrible business decision.

So, this is the discussion that you have. Why don’t you just block email? And the response will be that you can’t, because people need to communicate with the outside world. Well, the same is true outside of email. People need to communicate with the outside world. They work outside of the organization, so understand that this is a risk you have to live with, and design your solutions differently.

Make your data center an application destination like a public cloud

We had potential customers who told me they had a plan to get all their applications into the cloud by 2020. My response to them was that this just wasn’t going to happen. It just doesn’t make business sense to move all your applications, and if you don’t know it now, you’ll know it when you start to move some of these applications.

“The first step in digital transformation is understanding that what we built for the last 20 years doesn’t apply.”

What’s more important is that your data center becomes part of the cloud infrastructure and that you treat your own applications as cloud applications, whether you move them or not. By leveraging My Apps, which we built at GE, we were able to turn internal applications into something that looked like a SaaS application without ever moving them to AWS, Google Cloud, or Azure. Those platforms are enablers for certain things, but this doesn’t mean that you can’t transform yourself by continuing to host your own applications.

I get intrigued when people say they’re going to have a hybrid data center. No, you’re going to have a hybrid network. Just turn your data center into a destination for the people that are supposed to use it and you don’t have to do anything else. Now you may want to because it may be more efficient to run certain workloads in a cloud environment or it may become more efficient to rewrite some of your applications so they work better with some of the capabilities that AWS and Azure provide, but the reality is that’s not the first step in digital transformation.

The first step in digital transformation is understanding that what we built for the last 20 years doesn’t apply anymore. Right, wrong, or indifferent, it just doesn’t apply.

Security needs to shift from a control- to risk-based framework

Let’s talk about organizational impact. If you think about it, a security team was always about running and cleaning up the latest mess, and if we suggest that the mess is going away, it leaves an organization wondering why it has a security team.

What they should be focused on is identifying the risks to their organization and making sure those risks are mitigated appropriately. For instance, we did a risk analysis on our entire organization. We asked the CEOs to explain the risk to their business, because everything can’t be protected but we do want to protect what’s most important. Pretty much everybody came back with intellectual property as the number one risk.

You know what? The intellectual property in a washing machine or a light bulb has a lifespan until the day you ship it to Home Depot or Lowe’s. Then it’s out there and can be completely copied by anyone. Some of the intellectual property in an aircraft engine will decide whether you’re in the engine business for the next 20 years or not. Yes, both assets are valuable intellectual property and we would like to protect them, but where are we going to really spend our effort? Not on figuring out how not to lose a sock in a dryer, but how not to allow competitors to take that one sliver of technology that we think is going to differentiate us over the next 20 years in aviation.

The security people must turn into people who understand risk: people who understand where their highest risks are and put mitigations in place that keep those highest risks from actually occurring. In our organization we called the most critical assets “crown jewels.” They were so important to the organization that we were willing to put many controls on them, even though doing so invariably impacted the productivity of the people who needed access.
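One way to make this kind of prioritization concrete is a simple risk ranking. The sketch below scores each asset as likelihood times impact, which is a common risk-assessment convention and an assumption here, not a method attributed to GE. The `Asset` class, the 1-to-5 scales, the threshold, and the example scores are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    name: str
    likelihood: int  # 1 (rare) to 5 (frequent); illustrative scale
    impact: int      # 1 (minor) to 5 (business-ending); illustrative scale

    @property
    def risk_score(self) -> int:
        # Assumed convention: risk = likelihood x impact.
        return self.likelihood * self.impact


def rank_by_risk(assets):
    """Return the assets sorted from highest to lowest risk score."""
    return sorted(assets, key=lambda a: a.risk_score, reverse=True)


def crown_jewels(assets, threshold):
    """Return the assets whose risk score meets or exceeds the threshold."""
    return [a for a in rank_by_risk(assets) if a.risk_score >= threshold]


# Made-up scores, chosen only to mirror the contrast drawn in the text.
portfolio = [
    Asset("washing machine design", likelihood=3, impact=1),
    Asset("aircraft engine technology", likelihood=4, impact=5),
]

for asset in crown_jewels(portfolio, threshold=15):
    print(asset.name, asset.risk_score)
```

The point of the sketch is only that the extra controls go to the few assets above the threshold, rather than being spread evenly across everything labeled intellectual property.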

We made sure that when users were accessing those systems, or that data, or those services, they had no email access and they were not connected to the internet. It wasn’t a classified network but we were separating it from the rest of the network, an extra step to ensure that even if something bad happened to the network, it would never impact any other part of the network. And our security team was looking at it 24/7, because it was that important for us.

Developing a proactive risk-based mindset

In the near future, security teams will need to turn into hunters to understand if they are being targeted. They will need to turn into risk leaders to understand where the risks are to your organization. They will also need to turn into knowledge experts when people start to move stuff into cloud services and understand how to implement policies in a secure manner.

Application development: traditional vs cloud-native

The CIOs too have a couple of challenges in this new paradigm. The first challenge is to manage expectations and to guide the conversation about the difference between digital and cloud. Because CEOs and boards hear, “We have to go cloud.” What the CIO has to do is understand how to give the business the tools it needs to get the business growing and that cloud is a part of that strategy, but it is not the only thing.

Second, the modern CIO has to understand the capabilities of his or her organization and most of them will realize quickly that they don’t have the right talent in place to make this digital transformation. They have good people who have been worried about technology for the last 10, 15, 20 years, and what we’re saying here is that the technology is still important but the technology is actually changing. If you’re a network jockey and we decide to shrink the network so it’s not relevant anymore, the role and the need for you is going to change. On the other hand, if you’re a good network admin and you are moving applications off to AWS or Azure or Google Cloud, this will present different problems, so get yourself versed in what those issues are going to be.

If you’re on the application side and think you’re going to write an application the same way you did for the data center and allow it to run well in AWS, you’re fooling yourself. It’s a different skill set. “Lifting and Shifting” applications from on-premises to the cloud more often than not leads to disappointing results. You have to write apps differently. You have to think about them differently, you have to understand interaction between that cloud, other clouds, your users, and your data center. This is not what application people have done traditionally. Application teams get requirements from their functional users, they implement those functional requirements and everyone’s happy.

Developing an in-depth knowledge of the technology stack

But now application teams have to understand much more of the technology stack: the databases, what they’re using, the network connectivity between AWS and maybe the data center, the security protocols, the authentication protocols. Application people never had to worry about that—they always left it up to the infrastructure group because the infrastructure group managed the infrastructure. But you don’t own the infrastructure anymore. Now what?

My number one tip is to bring in a small team that has done this before. Pick a couple of applications that you think are good candidates for moving out of your data center into the public cloud and let that team do it. Seed some of your developers who know the functionality with that team, let them learn the tools and techniques, let them see how it can be done, why it should be done and in many cases why it shouldn’t be done. I think that gets the whole organization moving in the right direction.

If you show a few successes early on, both from a cost and a functionality standpoint, then you can get your application teams, your network teams, and your infrastructure teams to recognize that they were part of that success. It encourages them to want to continue the success, and to know that they are capable of taking on their own projects without continuing to hire people from the outside.

The roles of CIO, CTO, and CISO are changing

It’s not just the CIO, it’s the CTO, and the CISO as well. If you look at the three main roles, which are CIO, CTO, and CISO, the CIO shifts from technology first to business first. Understand what your business actually needs, understand what your business wants, understand how your business operates and find the best technology solutions to allow that to happen, whether you own them or not.

The modern CIO has to understand where the business is trying to go, because if a business isn’t growing, it’s dying, and everyone out there knows that they’re at threat from digital companies. He or she has to also understand what the company’s doing, where its threats are, where its opportunities are, whether there is any white space that this new digitally connected world can allow them to take advantage of.

The CTO has to shift from architecting corporate networks to embracing the fact that you can’t control everything, but you should know your users, you should know your devices, and you can control what they access. Don’t think you have to build it because you can’t. Don’t think that your solutions are the only solutions that people are going to use.

And the CISO has to shift from security and controls to risk and enablement. If you look at Salesforce today, it was first introduced in the organization not by IT, but by Sales. Why? Because it filled a need that IT couldn’t address. And it was all done under the radar, and then IT stepped in and said, look, we’re embracing cloud because we’re using Salesforce. If it were up to most IT organizations, they would’ve said no, we can build it ourselves, just give us the requirements.

And take a step back and think about any organization today. If you were able to build an HR system from scratch, would any organization say, “Yes, I want to do that?” Absolutely not. If you had to build a CRM system, would you do it? The answer is absolutely not. If you had to build an expense system, would you do it? Absolutely not.

We have large organizations out there running their own internal systems that need to start asking themselves when the appropriate time is to tip the balance, to take the internal HR system that has been customized to hell and turn it over to Workday, for example. When is the appropriate time to take the manufacturing system and move it into NetSuite? It may not be today, but if you’re not asking that question every single day, you’re going to miss the point where you should’ve said, “Now’s the time to do it.”

“The CISO has to shift from security and controls to risk and enablement.”

The CIO has to educate functional users that they are not going to get every bell and whistle they want and that they had. But instead, they get speed, they get accessibility, they get lower cost, and they get faster functionality introduction.

Chapter 8 Takeaways

In the new world of cloud, CIOs are no longer providers of IT services; they are enablers of business services, the services that can make businesses more competitive and agile. Review the following list of the new ways a CIO, CTO, CDO, or CISO should be thinking:

The new CIO/CTO/CDO:

- Focus on growth. Go fast—speed is the new currency

- Move from an IT shop to a “Digital Enabler”

- Be honest with your board about technology debt

- Address your legacy environment head-on

- Embrace the cloud, but thoughtfully for the right applications

- The internet is the new corporate network. Transform your legacy network to a direct-to-cloud architecture.

The new CISO:

- Stop talking security with your board. Focus on risk.

- Create a risk assessment and risk appetite so that the business has a means to make decisions

- Separate your critical assets from the consumers of those assets (don’t put users and servers on the same network)

- Get identity right. Invest in identity and access management.

- Securing the network is no longer relevant. Connecting users to their applications securely irrespective of the network should be the goal.