SECURE CLOUD TRANSFORMATION

THE CIO'S JOURNEY

By Richard Stiennon

Chapter 1

Mega-Trends Drive Digital Transformation

“Digital transformation goes well beyond technology to our customer experience, our user experience, and business in general. Last year, we created a digital team that would help us in that digital transformation journey. We took into account sales support, automation, and other projects.”

Hervé Coureil, Chief Digital Officer, Schneider Electric

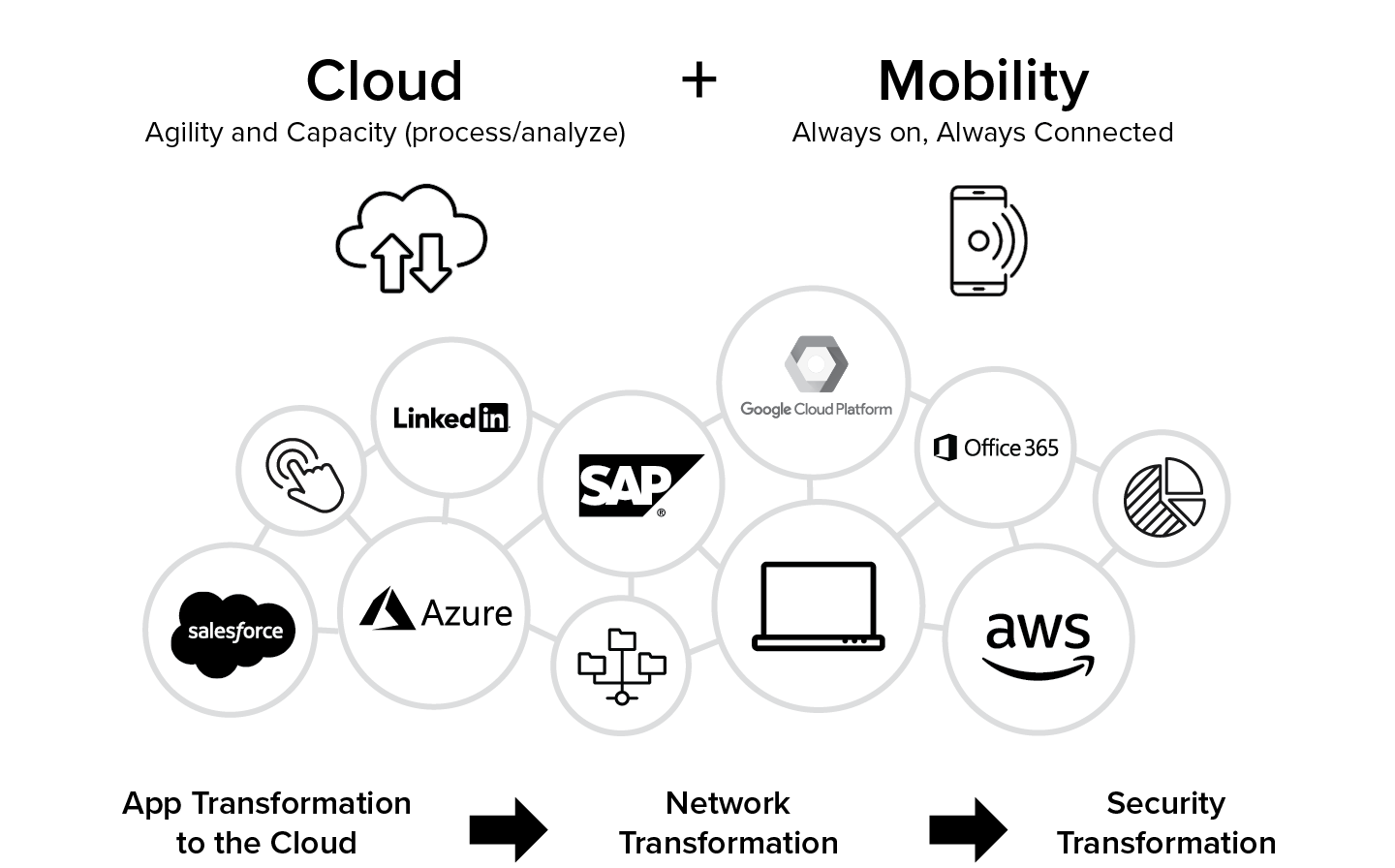

Cloud and mobility create opportunities for growth

Organizations are undergoing a massive shift in their IT strategies. The adoption of cloud applications and infrastructure, the explosion of internet traffic volumes, and the shift to mobile-first computing have enhanced business agility and become a strategic imperative for CIOs. Organizations are embracing these trends to empower business users, increase speed of deployment, create new customer experiences, reengineer business processes, and find new opportunities for growth.

Always on, always connected, and always working has become the new mantra for business. Enabling the same direct-to-cloud experience for mobile employees becomes the logical next step for IT. Today, every user is a power user and should be given direct access to corporate data and applications regardless of where they are—at home, at a coffee shop, at a hotel, or on an airplane.

Mega-trends introduce new challenges for enterprises

The convergence of these new trends creates countless opportunities for innovation and growth. At the same time, enterprises find it difficult to embrace these trends with traditional security architectures, because doing so introduces several key IT challenges:

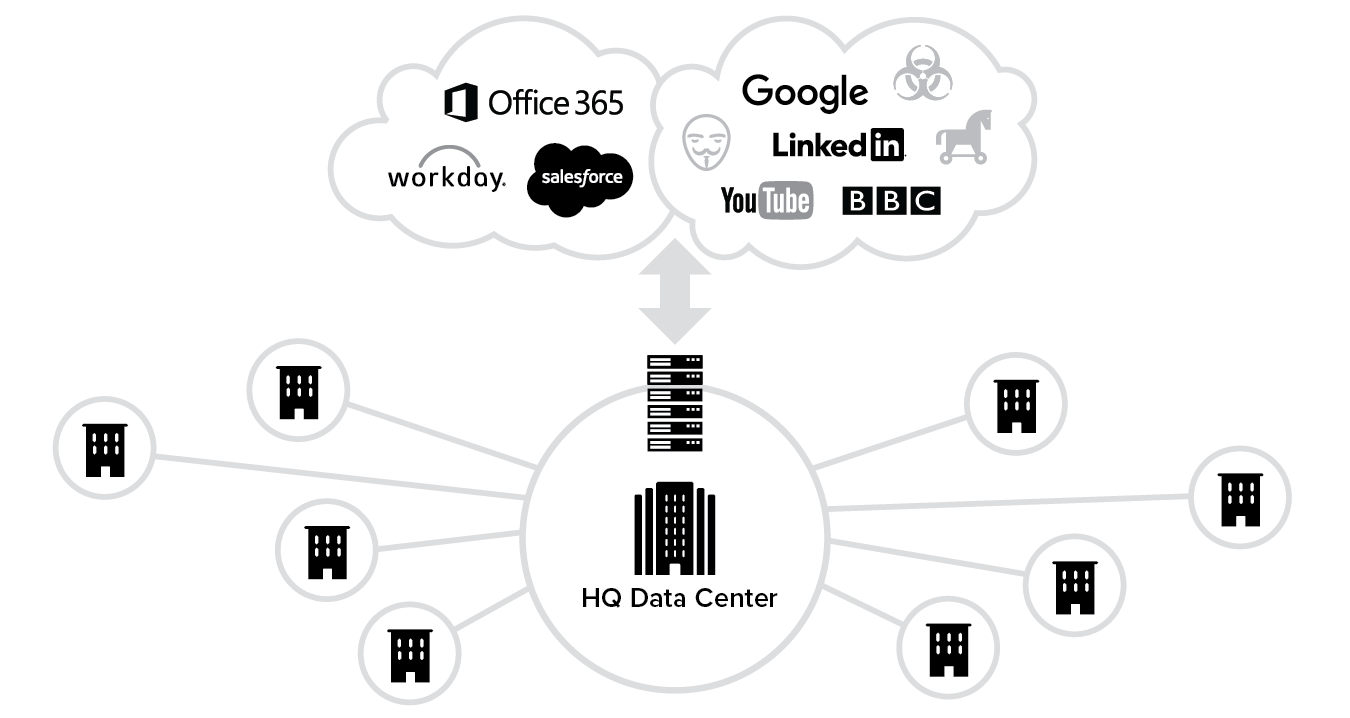

- Growing use of the cloud and the internet creates gaps in security coverage. Enterprise applications are increasingly moving from being hosted in on-premises data centers within the corporate network to SaaS applications hosted in the public cloud. The growing use of the public cloud can significantly increase business risk, as security policies that are consistently applied within the traditional corporate network either cannot be enforced or are easily circumvented in a cloud environment.

- Microsoft Office 365 strains network capacity and data center infrastructure. Unlike other SaaS applications that are used intermittently or by specific departments, Microsoft Office 365 moves many of an organization’s most heavily used applications, such as Exchange and SharePoint, to the cloud, which dramatically increases internet traffic and can potentially overwhelm the existing network and security infrastructure.

- Workforce mobility makes every user a potential source of security vulnerability. The shift towards an increasingly mobile workforce has caused employees to demand easy and fast access to the internet as well as on-premises and cloud applications, regardless of device or location. To permit access for their mobile employees, organizations have typically relied on VPNs (Virtual Private Networks), which grant the user access to the corporate network instead of just the application that is requested. This creates increased points of vulnerability, because a single compromised VPN user can expose the entire corporate network.

These challenges are exacerbated by an increasingly severe cyber threatscape

Today’s sophisticated hackers, motivated by financial, criminal and terrorist objectives, are exploiting the gaps left by existing network security approaches with increasingly sophisticated and evolving threats. The growing dependence on the internet has increased exposure to malicious or compromised websites. According to Mozilla Firefox, over 50% of browser-based internet traffic is encrypted.1 Encryption has become one of the most effective tactics used by hackers to avoid detection by existing appliances. As a result, organizations are more exposed than ever to today’s cyberattacks.

The enterprise now needs to rethink how it protects and secures its most valuable assets—its employees, customers, and partners—from ever-rising breaches and cyberattacks.

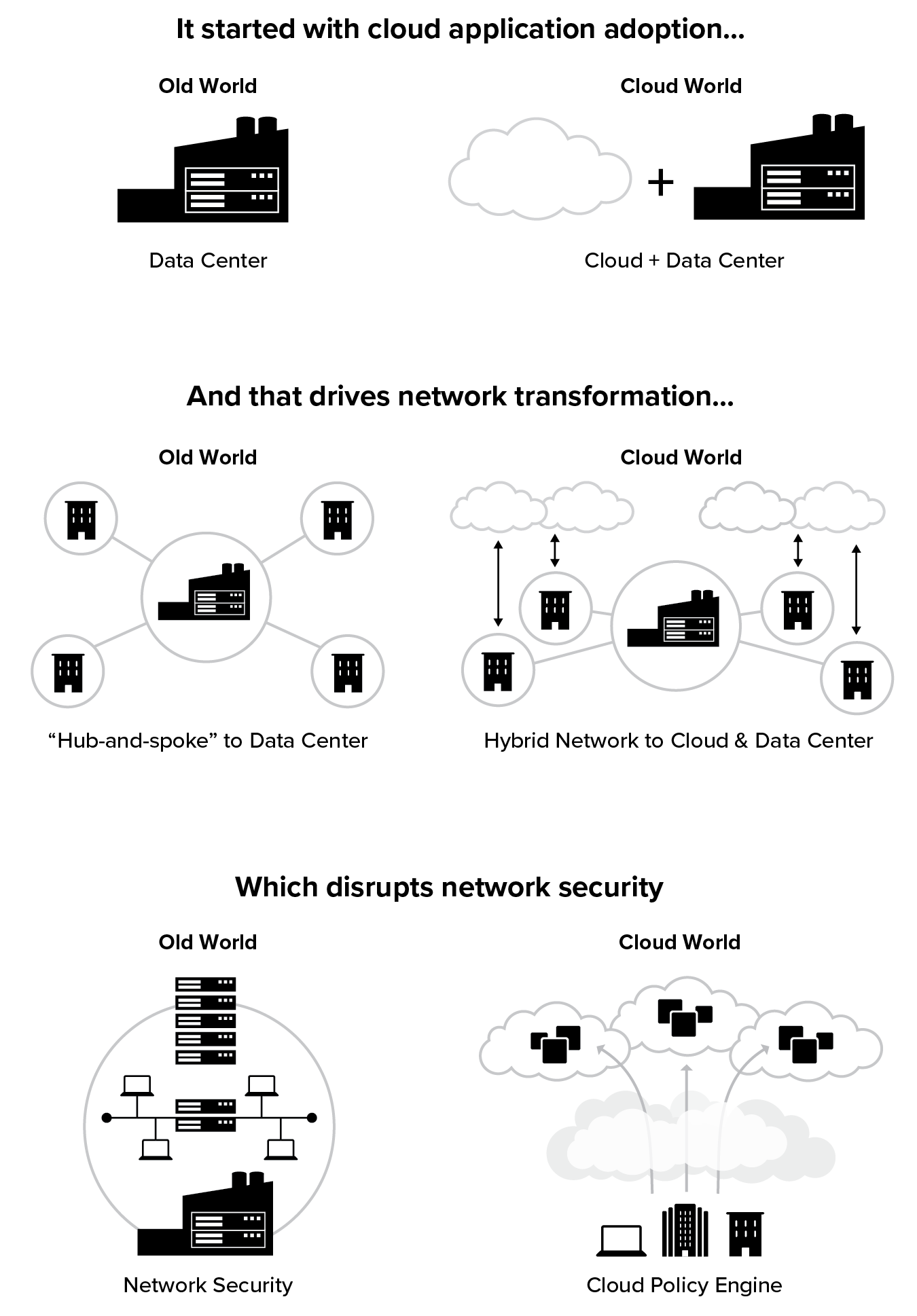

Application Transformation

Organizations are increasingly relying on internet destinations for a range of business activities, adopting new external SaaS applications for critical business functions and moving their internally managed line-of-business applications to the public cloud, or IaaS (infrastructure as a service). For fast and secure access to the internet and applications, enterprises need to be able to securely migrate their applications from the corporate data centers to the cloud, and from their legacy network architectures to modern direct-to-cloud architectures.

This requires a transformation at the application level whereby policies can be set by the organization to securely connect the right user to the right application, regardless of the device, location, or network that user is on:

- Users should be able to securely connect to externally managed applications, including SaaS applications and internet destinations.

- At the same time, authorized users should also have secure and fast access to the internally managed applications hosted in enterprise data centers or the public cloud.
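The policy idea above, connecting the right user to the right application regardless of device, location, or network, can be sketched as a lookup keyed only on user and application. This is a minimal illustration under stated assumptions: the application names, group names, and the `can_connect` helper are hypothetical and not taken from any product.

```python
from dataclasses import dataclass

# Hypothetical policy table: application name -> groups allowed to reach it.
# Covers both an externally managed (SaaS) app and an internally managed one.
APP_POLICY = {
    "crm-saas": {"sales", "support"},
    "payroll-internal": {"finance"},
}


@dataclass(frozen=True)
class User:
    name: str
    groups: frozenset


def can_connect(user: User, app: str) -> bool:
    """Allow a connection only if the user is in a group authorized for the app.

    The decision deliberately ignores device, location, and network: access is
    granted per application, never to a whole network segment.
    """
    allowed = APP_POLICY.get(app, set())  # unknown applications are denied
    return bool(user.groups & allowed)
```

A user in the `sales` group would reach `crm-saas` but not `payroll-internal`, whichever network they happen to be on.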

Network Transformation

Enabling fast and secure access to applications and services on the user’s device of choice, whether in the cloud or the data center, regardless of where the user is located, demands a shift in thinking, one that requires a change in the way enterprises view their network topology. As the use of cloud drives up the percentage of corporate traffic that is destined for the internet, it becomes obvious that traditional hub-and-spoke network topologies, where traffic is backhauled from remote offices and mobile employees to the data center before reaching the cloud, are no longer optimal. Why backhaul traffic from hundreds of remote offices to headquarters or the data center before sending it on its way to Office 365? This would be analogous to flying from New York to London via Miami! One CTO measures over 100 terabytes of traffic a month to Office 365 alone.

To mitigate this, companies are engaging in “local internet breakouts” as a critical component of their cloud adoption. The concept is simple: each remote location, instead of connecting back to the data center via expensive MPLS (Multiprotocol Label Switching) circuits, has one or more connections to the internet so that the traffic destined to the internet can be peeled off at the source. This is often called network transformation.
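As a rough sketch of the breakout decision described above, the snippet below sends internet-bound flows out through the local connection and keeps only internal destinations on the backhaul path. It assumes, purely for illustration, that internal applications use private address space; the path labels and function names are hypothetical.

```python
import ipaddress

LOCAL_BREAKOUT = "local-internet-breakout"
BACKHAUL = "backhaul-to-datacenter"


def next_hop(destination_ip: str) -> str:
    """Pick an egress path for one flow leaving a remote office."""
    addr = ipaddress.ip_address(destination_ip)
    # is_private is True for RFC 1918 ranges and other reserved, non-public
    # ranges; here that stands in for "hosted inside the corporate network".
    if addr.is_private:
        return BACKHAUL
    return LOCAL_BREAKOUT


def breakout_share(destinations) -> float:
    """Fraction of flows that are peeled off at the source."""
    if not destinations:
        return 0.0
    local = sum(1 for d in destinations if next_hop(d) == LOCAL_BREAKOUT)
    return local / len(destinations)
```

The higher the share of cloud-bound traffic at a site, the less of it needs to touch the MPLS circuit at all.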

Security Transformation

These application and network transformations raise security concerns that have to be addressed along the way. Where data is stored, and how it is secured and accessed, are much broader security concerns for cloud adoption. With regard to local internet breakouts, traffic was traditionally backhauled because security controls were anchored in the data center to protect the network. In the new world, where traffic goes direct-to-cloud over the internet, you don’t control the network, and network security becomes increasingly irrelevant. In this direct-to-cloud world, security needs to move to the cloud.

Cloud transformation has led to a re-architecting of security that is disrupting the security industry itself. Not only are these changes creating challenges for the IT department, but threat actors are evolving too. Hackers are beginning to understand underlying business processes and to target the vulnerabilities in money flows and data stores. A new security approach to protect users and applications is needed.

Three areas of secure cloud transformation

As we will learn from the real-world CxO stories shared throughout this book, there are three phases to secure cloud transformation as illustrated in Figure 1.3 below:

- Application transformation: Moving applications to the cloud;

- Network transformation: Evolving from a hub-and-spoke topology to hybrid networks;

- Security transformation: Securely connecting users and devices to their applications regardless of the network they are on.

CIO Journey

General Electric Company

Powering Global IT Transformation

Company: General Electric

Sector: Conglomerate

Driver: Jim Fowler

Role: Former CIO

Revenue: $122 billion

Employees: 300,000

Countries: 170

Locations: 8,000

Company IT Footprint: General Electric is a global name and has been an icon of technology innovation for well over a century. At the time of writing, there were about 9,000 IT employees and another 15,000 contractors at GE. It maintained an application portfolio of around 8,000 applications distributed across 45,000 compute nodes. Its IT infrastructure was spread across 300,000 employees who sat in 170 countries around the world.

“We had a target to take a billion dollars of technology costs out of our own operations. And we did it! By the end of 2017, we exceeded the billion dollars of productivity, and we figured out how to deliver three billion dollars’ worth of productivity within the same time frame.”

Jim Fowler, Former Chief Information Officer, General Electric Company

General Electric Company (GE) embraced cloud transformation to improve employee productivity while enabling growth, to reduce the complexity and associated costs of its infrastructure, and to ultimately improve the security posture for mobile users. Here is the story of how GE executed its cloud transformation across 8,000 global locations.

In the words of Jim Fowler:

I was the group CIO for General Electric until late 2018 and have been with GE for 18 years, having worked in every one of our businesses (apart from our healthcare business) in that time. I’ve done everything from being a systems administrator to a Six Sigma Black Belt focused on process excellence and improvement. Around 2015, I was approached for this CIO position where they said, “Hey, we’re entering into a time of digital transformation. How we run as a company is going to change drastically as we become technically focused. We’d like you to take this job.” This was a significant milestone for us as a company, as none of my predecessors came from IT. They came either from finance or business development. So, I’m the first CIO who has come up from technology inside GE.

The launch of the GE digital software business

Seven years ago, at a request from our CEO, we started looking at what it would take to create a digital software business inside GE. We weren’t thinking about GE internally, but instead about creating software to help our customers get more out of the assets that they run. As we went down that path, we started conceptualizing what a GE software business would look like, and we soon realized, You know what? If we’re going to look at how we help our customers be more digitally integrated into the systems that they run, we better think about how we do the same thing inside our own four walls. And so, we set a target for ourselves: we would find a way to drive out a billion dollars, cumulative, of productivity cost by using technology that we apply inside GE.

We had a target to take a billion dollars of technology costs out of our own operations. And we did it! By the end of 2017, we exceeded the billion dollars of productivity, and we figured out how to deliver three billion dollars’ worth of productivity within the same time frame. We became our own best example of what good digital industry looks like.

A new focus on cloud

The transition to the cloud became important as we realized it was going to take an investment to replicate what we had on-premises in the cloud, and our existing application infrastructure needed to be a lot nimbler. We needed to be able to make changes quickly and spend our resources on application content development rather than infrastructure work like storage and servers.

It started with data center transformation

At that point we had seven data centers around the world that we were housing with our own infrastructure, so we thought: What if we could take the majority of the resources that were in those data centers and get those to be cloud-based? We realized that using the cloud would help us free up the manpower that we needed to drive this new transformational idea.

The cloud strategy we developed was about taking out our own infrastructure from the data center perspective and building upon the idea of reuse versus reinvention. In a cloud-based world, there is a common code set and a common set of software. So we used shared micro-services and common components that allowed us to build applications faster.

In the end, we realized it wasn’t just about freeing up resources. It was about increasing the velocity with which we could build our own code. For every dollar we spend in this “world of the cloud,” we reuse what we already have, and we see a three- to four-dollar greater return. And that is why our evolution into the cloud has become so important to us.

Next, we needed to transform our workforce

Shifting to the cloud meant replacing our outsourced contractors, since we had handed much of our hands-on technology expertise over to third parties. We knew that had to change, so for the last two years, 95% of our new hires have been in entry-level positions. In the last twelve months alone, we’ve brought in about 1,500 people in a range of new positions: building code, system administration, database administration, and cloud architecture.

Next, we had to focus on our project managers. They knew how to manage outsourced labor, but they needed development on how to run a product. So, we had to build on their product management skills within the current generation of project managers. They had to understand not just how to run a project plan, but also how to manage product development—pricing a product, understanding cost, and how to make investment decisions on features and functions. It was a big transition for them to learn ways to determine output from the company’s perspective.

We established guardrails to support innovation

Culturally, I would say one of the hardest things to adapt to has been this idea of reuse versus reinvent. We have a 125-year history full of strong innovation and smart engineers. That culture manifests itself as employees trying to reinvent what somebody else has already done because they think they can do it better.

What they don’t realize is that this approach inevitably slows progress, because it takes longer to get to a final solution. We’re trying to focus on improving ideas, rather than inventing new ones or reinventing old ones.

In the past year, we’ve tried to encourage this new focus by implementing what we call a set of guardrails. The guardrails set a minimum standard by which all our developers must operate. But within those guardrails, we welcome and encourage innovation. We like for our people to find newer and better ways to use technology. But when they want to go outside of those guardrails, it requires our chief architects to make an architectural decision to change the guardrails.
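One way to picture guardrails of this kind is as an automated check that every project must pass, with exceptions granted only through an explicit architectural decision. The sketch below is an assumption-laden illustration, not GE's actual standard: the guardrail names, runtime values, and the `check_project` helper are all hypothetical.

```python
# Hypothetical guardrails: the minimum standard every project must meet.
GUARDRAILS = {
    "approved_runtimes": {"python3.12", "java21"},
    "require_encryption_at_rest": True,
}


def check_project(project: dict, approved_exceptions=frozenset()) -> list:
    """Return guardrail violations that have no architect-approved exception."""
    violations = []
    if project.get("runtime") not in GUARDRAILS["approved_runtimes"]:
        violations.append("runtime")
    if GUARDRAILS["require_encryption_at_rest"] and not project.get(
        "encryption_at_rest", False
    ):
        violations.append("encryption_at_rest")
    # An exception models the chief architects deciding to move the guardrail.
    return [v for v in violations if v not in approved_exceptions]
```

Inside the guardrails a team is free to build however it likes; the check only reports what falls outside them.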

Moving towards data protection based on risk tolerance

Changing our network infrastructure was not as hard as we anticipated. We already had a complex network structure because we have over 8,000 different locations that we support. So, instead, we had to focus on data. How do we think about the value of data or the risk of data loss or data manipulation? The answer: we built a data infrastructure on top of the network that protects us from a risk perspective.

For example, we think one percent of our data represents 80% of the risk to the company, and that data sits inside a super-controlled vault of information that is separate from what we consider the rest of the GE network.

We have different classifications of data that determine, from a risk perspective, which level of the network the data can reside on. Once we had that laid out, the networking was really just about a physical design that fit those data requirements. So what I always tell people is, don’t worry so much about designing the network first. Design your risk tolerance first, as it relates to the loss or manipulation of data, and that will allow you to define how you think about the network in what is going to be a hybrid cloud environment.
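The "classify the data first, then derive the network" approach can be sketched as a mapping from data classification to the least-protected network zone that data may sit on. The class names, zone names, and their ordering below are illustrative assumptions, not GE's actual scheme.

```python
from enum import IntEnum


class Classification(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    CROWN_JEWEL = 3  # the small slice of data that carries most of the risk


class Zone(IntEnum):
    INTERNET = 0
    CORPORATE = 1
    RESTRICTED = 2
    VAULT = 3  # tightly controlled and separate from the rest of the network


# Least-protected zone on which each class of data is allowed to reside.
MIN_ZONE = {
    Classification.PUBLIC: Zone.INTERNET,
    Classification.INTERNAL: Zone.CORPORATE,
    Classification.CONFIDENTIAL: Zone.RESTRICTED,
    Classification.CROWN_JEWEL: Zone.VAULT,
}


def may_reside(data_class: Classification, zone: Zone) -> bool:
    """True if the zone is at least as protected as the data class requires."""
    return zone >= MIN_ZONE[data_class]
```

With a table like this in hand, the physical network design becomes a question of which zones each site needs to host.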

Moving from a hub-and-spoke to a local internet breakout architecture

We have different types of networks. You’ll find our large sites are still using hub-and-spoke networks that come back into a core network architecture that allows connectivity both inside the GE network and out to the GE cloud.

In our smaller locations, we’re disconnecting them from the MPLS networks, and we’re using local internet providers, like Time Warner or Xfinity in the United States, or Orange in Europe, to provide connectivity to the internet. This allows those small sites to be internet-connected back into the GE world, whether that be a cloud-based solution or an internal GE network.

We think of our smaller sites the same way we think about a home office, where it’s internet-connected, and we provide functionality they need. This way the smaller sites can have a secure connection for data and we can manage GE data that sits on the devices in those remote locations rather than try to think about implementing security around the distributed network.

Security is about data, not the network

Our security starts and ends with the definition of the data. We have a strong understanding of the regulatory requirements around how that data is managed. Then we build the enterprise architecture or the physical architecture around that data. So, we design security in from the very beginning of a project based on those discussions. We don’t think about security as a set of requirements or checkboxes. We think about security as features that we designed into the product based on decisions around the data.

We also set guidelines on the secure software development lifecycle that require product managers to include security features based on the data requirements and the transactional data that sits in those systems. Then we build an infrastructure around being able to watch and manage how that data moves over time, based on its criticality.

On the network or off: blurring the lines

Our last CTO, Larry Biagini, predicted that the lines between what’s inside the GE network and outside the GE network would blur, making it harder to tell what is inside the corporate network and what is outside it. Zscaler’s cloud security platform gave us a way to think about how to control data in transit in a world where we didn’t control the network.

As a result, we don’t run traditional VPN inside GE anymore. We have a custom-built application, which is built on top of some of the Zscaler connectivity that runs on every device. When you connect to any network anywhere in the world, it determines a) are you on something we control or not and b) does your PC have the level of controls on it that we need to protect our data? If not, let’s put them there, and if yes, then I’m going to give you access to a certain level of information inside the organization.

What’s behind all that is a Zscaler infrastructure that helps us not worry about where somebody might show up to work one day, and it creates that ubiquitous connection between the GE infrastructure and the end user’s PC, while enforcing controls. You can control everything from traditional proxy blocking to starting to build intelligence that says, when Jim Fowler shows up on a PC and is sitting in Atlanta, Georgia, giving him access to these network resources makes sense. But when Jim Fowler shows up with his PC in some country we have concerns with, we’re going to have to restrict his access a little bit more. We’re not necessarily going to give him access, and we’re going to monitor more closely what he’s doing on that device today. And so Zscaler fills a lot of different boxes—it’s not just your traditional proxy provider, but a next-gen network security provider for us. It allows us to manage this extended network of devices that sits out in the GE infrastructure.
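The decision flow described above (is the network one we control, does the PC carry the required controls, and where is the user) might look roughly like the following. This is a hedged sketch of the general pattern only; it does not represent Zscaler's API or GE's custom application, and the field names, access levels, and placeholder country code are assumptions.

```python
from dataclasses import dataclass

# Placeholder code standing in for "a country we have concerns with".
HIGH_CONCERN_COUNTRIES = {"ZZ"}


@dataclass(frozen=True)
class Context:
    user: str
    on_managed_network: bool
    device_compliant: bool
    country: str


def decide(ctx: Context) -> dict:
    """Map connection context to an access level and a monitoring level."""
    if not ctx.device_compliant:
        # Required controls are missing: install them before granting access.
        return {"access": "none", "monitoring": "normal", "remediate": True}
    if ctx.country in HIGH_CONCERN_COUNTRIES:
        # Same user, riskier location: less access and closer monitoring.
        return {"access": "restricted", "monitoring": "enhanced", "remediate": False}
    level = "full" if ctx.on_managed_network else "standard"
    return {"access": level, "monitoring": "normal", "remediate": False}
```

The same user gets a different answer depending on context, which is the behavior a static VPN grant cannot express.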

In the past, we were big VPN users, but have decreased our VPN usage by almost 90% since we started this project. We are connecting about 3,000 of our smaller locations through a local internet provider versus a high-cost MPLS or dedicated network as we would have had in the past.

In data centers and large locations, we still have dedicated infrastructure, but it’s that small office-type location that we think about very differently than we did ten years ago.

Realizing cost savings and performance improvement

Depending on which country the site is in, we see savings from 30% to 75% in infrastructure costs. This comes from being able to leverage more ubiquitous forms of connecting to the internet versus having dedicated lines and firewalls and routers and switches in those locations.

One of the advantages that we weren’t looking for, but that we gained, was in performance. In the old world, every transaction routed back through a central data center and then was sent back out to the receiving system. In the new world, when we’re connecting via the internet, all of a sudden that network performance goes up. So, wherever we’re using cloud-based solutions, we’ve seen performance improvements with a lot of our transactional applications—as high as 70% improvement in transaction times.

When you break down the barriers of everything having to sit in your own network in your own data center, it becomes a lot easier to have conversations with customers, not just about how you do that, but also about making the data flow in a secure fashion, so that from a point-to-point perspective, the data is completely secure. Between two different companies, we could actually share data in a more meaningful way than we had in the past. And so, I’d say that lower cost and improved performance were the two big things that we experienced as we went on this journey.

CEO Journey

Cloud Security Alliance

Best Practices for Providing Security Assurance Within Cloud Computing

Organization: Cloud Security Alliance

Driver: Jim Reavis

Type: Non-profit

Role: Co-founder, CEO

Organization profile: The Cloud Security Alliance (CSA) is a not-for-profit organization with a mission to promote the use of best practices for providing security assurance within cloud computing, and provide education on the uses of cloud computing to help secure all other forms of computing. CSA harnesses the subject matter expertise of industry practitioners, associations, governments, and its corporate and individual members to offer cloud security-specific research, education, certification, events, and products.

“The need for regulatory compliance probably comes up first for organizations moving to the cloud.”

Jim Reavis, Co-founder & Chief Executive Officer, Cloud Security Alliance

Cloud Security Alliance (CSA) was created in 2008 to get ahead of the security issues it saw coming with the move to the cloud. In the journey that follows, Jim Reavis, the co-founder and CEO of CSA, shares what motivated the formation of the alliance in the early days of cloud adoption, and the initiatives it continues to drive to create governance and share best practices within the community and industry.

In the words of Jim Reavis:

In 2008, I was looking for things that were going to impact the IT industry, like virtualization of operating systems. That’s when I started to see that the early adopters I respected were beginning to kick the tires of this thing called cloud.

It became apparent that we were moving to a world where someone armed with a credit card could get access to tremendous computing power to quickly mash up impactful applications.

The problem I anticipated by 2013 was that there would be a lot of cloud options available, but from a security perspective, there would not be a lot of defensible best practices and strategies for cloud adoption, and for understanding the risks in a way that could be communicated to auditors.

This line of thinking started a snowball effect, and after talking to my CISO friends, I realized we really needed to get started on creating a whole cloud security ecosystem.

In 2008, during the financial meltdown, we brought together a group of smart industry people and started creating white papers. We wanted to start from what we understood the cloud was right then, and where it could go.

I took these ideas to a group of industry experts from eBay, Intuit, Qualys, and Zscaler. They provided encouragement and access to their networks to find the resources for all the different areas. And then we went to work on it and, at the RSA Conference on April 21, 2009, we announced the Cloud Security Alliance, with a mission to promote the use of best practices for providing security assurance within cloud computing. We also published Guidance for Critical Areas of Focus in Cloud Computing to set the stage for what the CSA was all about.

It took a lot of people by surprise because they were thinking about the need for the same type of organization. But we put together a pretty complete version 1.0 of our Guidance. And many of those principles are still in the many iterations we’ve done since then. From that point on, we were viewed as an authoritative source.

In 2013, we heard a lot of enterprises tell us that having tools like the Guidance saved millions in IT costs, and how they could make the jump to the cloud sooner, because we had some defensible, vendor-neutral, intellectual tools to help explain to the rest of the ecosystem what we were doing.

It was a community, grassroots effort—a coalition of the willing—that just happened to have more time than they normally would have in that 2008 timeframe. From there, we built on the initial success.

At the CSA, we have a philosophy of making all of our research freely available. Sometimes we don’t even know how enterprises are using what we do or how they’re interacting with it. We see things from a lot of different levels. For example, a large oil and gas company viewed the cloud from a lens of four main pillars: awareness, visibility, opportunism, and strategy.

You are already in the cloud. Most places find they are probably using more cloud than they know.

From credit card projects to the entertainment industry, there are small firms that do everything in the cloud. It’s an important part of the supply chain, but they also may not necessarily have the same technical visibility as a larger company.

So, understanding why people are using the cloud, what they’re using, what problems they’re trying to solve, how they’re trying to transform the business—it’s all key to understanding the impact.

As for opportunism, it’s important to know it’s going to be all cloud in the future in terms of backend data centers. Understanding that, we can find the best ways to move forward on a case-by-case basis, and to use security to solve business problems.

Cloud strategy

Many organizations have settled on a cloud-first strategy. Even the U.S. Federal Government announced in 2011 that it would have a cloud-first strategy.

Strategy is about how to create the organization, technical architecture, culture, communications, and platform. We need to make it much simpler to enable different parts of the business to have what I call a cloud dial-tone.

If you have the right identity federation, and the right policies in place, you can do it your own way.

The cloud security landscape

The need for regulatory compliance probably comes up first for organizations moving to the cloud. The data sovereignty and data protection aspects of it are of particular concern because you don’t necessarily have control of your own data. If you have a global utility that’s overlaid on multiple nation-states with their own regulatory regimes, that makes data sovereignty a paramount issue.

From a technical security perspective, the raw security concerns I see have become more nuanced. We hear from a lot of enterprises that top-tier providers do get it now. They have matured quickly and because of the scope of their platforms, they have visibility into attacks globally.

Cloud service providers have a security responsibility and enterprises need to be able to vet them properly. They need to understand that the cloud isn’t just the top five infrastructure providers, but it’s thousands of other entities that provide services on top of the infrastructure.

CSA engagement

More than any other sector, the financial services sector is participating heavily in the Cloud Security Alliance. This sector tends to be more sophisticated when it comes to IT. They engage with us more directly and want to share best practices and share their pain points with their peers.

We receive many requests to engage with governments on behalf of our members—engage with regulators and provide the sort of vendor-neutral voice to help them understand what we see. We have to explain what the cloud is, where the risks are, and what they need to be thinking about.

It’s exciting times as organizations are trying to become more agile, to be “software-defined” in many aspects of their business.

Identity and federation

With identity and federation, there has been enormous progress. There isn’t as much pain involved in being able to take any new application and have the right way to plug that in with SAML (Security Assertion Markup Language) and other federation schemes.

We haven’t moved as quickly as I had hoped in having pervasive, multi-factor authentication (MFA) everywhere, but it’s certainly in a lot of places, and it’s certainly well understood. Going forward, MFA should extend beyond the idea of the human and the user to make sure that anything that’s an entity, any internet of things (IoT) device, any virtual machine instance, any data store, any application—anything that you can think of that’s a part of this infrastructure—will have an identity. It will have certificates. It will have the ability for us to understand it as a discrete and trusted component so that we can pull things out of it, and we can build a continuous audit trail or whatever else we need based on identity.
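To make the idea of "every entity has an identity, and we can build a continuous audit trail on it" more concrete, here is a small sketch in which each action is recorded against an entity ID and chained to the previous record by a hash, so later tampering is detectable. The hash chain is an illustrative technique chosen here, not something the CSA guidance prescribes, and certificate handling is left out entirely; all names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

_FIELDS = ("entity", "kind", "action", "prev")


def _digest(body: dict) -> str:
    """Stable SHA-256 digest of one audit record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditTrail:
    entries: list = field(default_factory=list)

    def record(self, entity_id: str, kind: str, action: str) -> str:
        """Append an action by any entity (user, VM, IoT device, data store)."""
        prev = self.entries[-1]["digest"] if self.entries else ""
        body = {"entity": entity_id, "kind": kind, "action": action, "prev": prev}
        digest = _digest(body)
        self.entries.append({**body, "digest": digest})
        return digest

    def verify(self) -> bool:
        """Check that no record was altered and the chain is unbroken."""
        prev = ""
        for entry in self.entries:
            body = {k: entry[k] for k in _FIELDS}
            if entry["prev"] != prev or entry["digest"] != _digest(body):
                return False
            prev = entry["digest"]
        return True
```

Because the trail is keyed on identity rather than on a human login, a virtual machine and an IoT sensor are recorded the same way a user would be.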

Impact on workforce

A big issue we hear about is the retooling of the workforce. Unless you go with some different definition, no one has 20 years’ experience in cloud, and so Global 2000 companies are hiring people fresh out of college and training them. You can’t run your IT organization on newly minted college graduates, but you need a strong infusion of fresh talent.

At the major cloud provider conferences like AWS re:Invent and some of the others, attendees get excited and charged up about what’s possible. It is creating a hunger, but it’s a lot harder to flip a switch on people than it is on systems. It’s a slog to get people to change. The work is not all in retraining. We need more bite-sized education on how to do this, across the board, for every role in IT.

Better security through automation

Automation has created a different approach to security. The ability to instantiate and decommission compute, virtual machines, or containers can shrink your attack surfaces. Systems don’t degrade with time and vulnerabilities have a much shorter half-life. The traditional ways of very carefully curating servers, like a thoroughbred, are over. Slow regression testing for understanding changes gets replaced by rapid instantiation and decommissioning of systems.

The ability to use automation tools to deploy pristine images decreases the opportunity for vulnerable systems to be attacked. It is so much better than we’ve ever had before.
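The "replace rather than curate" approach can be sketched as a rotation plan: any instance that is not running the current pristine image, or that has lived past a maximum age, is scheduled for replacement. The fleet model, image names, and thresholds below are hypothetical, and a real deployment would call a cloud provider's API rather than return a list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    name: str
    image: str
    age_hours: float


def plan_rotation(fleet, golden_image: str, max_age_hours: float) -> list:
    """Return names of instances to decommission and redeploy from the golden image.

    An instance is rotated if it runs an outdated image or has been alive
    longer than max_age_hours, which caps how long any vulnerability can persist.
    """
    return [
        inst.name
        for inst in fleet
        if inst.image != golden_image or inst.age_hours > max_age_hours
    ]
```

Shortening `max_age_hours` directly shortens the window in which an unpatched system is exposed.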

What not to do on your cloud journey

It’s a new world. Don’t take all of your old approaches with you. The other aspect of all this is that it’s really cloud-native. You can’t assume that there’s any physical choke point that’s bringing everything back to a corporate enterprise perimeter and analyzing things. You have to understand what a true virtual, cloud-native architecture looks like. When you understand that, you can understand how to move to the cloud securely.

Chapter 1 Takeaways

In this chapter we covered the mega-trends that are driving enterprise digital transformation, the emergence of cloud transformation, and some common considerations. At this stage, you should start thinking about the cloud as your data center, or at least a flexible extension of your data center.

In the next section of this book, we will delve deeper into the transformation journey and glean insights from other enterprise IT leaders and their real-world cloud transformation journeys.
